The dark reality of Meta’s AI glasses for women

Women are already reporting how the technology is being misused

Meta’s AI glasses are being marketed as the next step in wearable technology, a hands-free way to capture content, access information and essentially interact with the world. But as they become more widely used, concerns about how they are already being misused are growing - particularly when it comes to women’s safety and privacy.

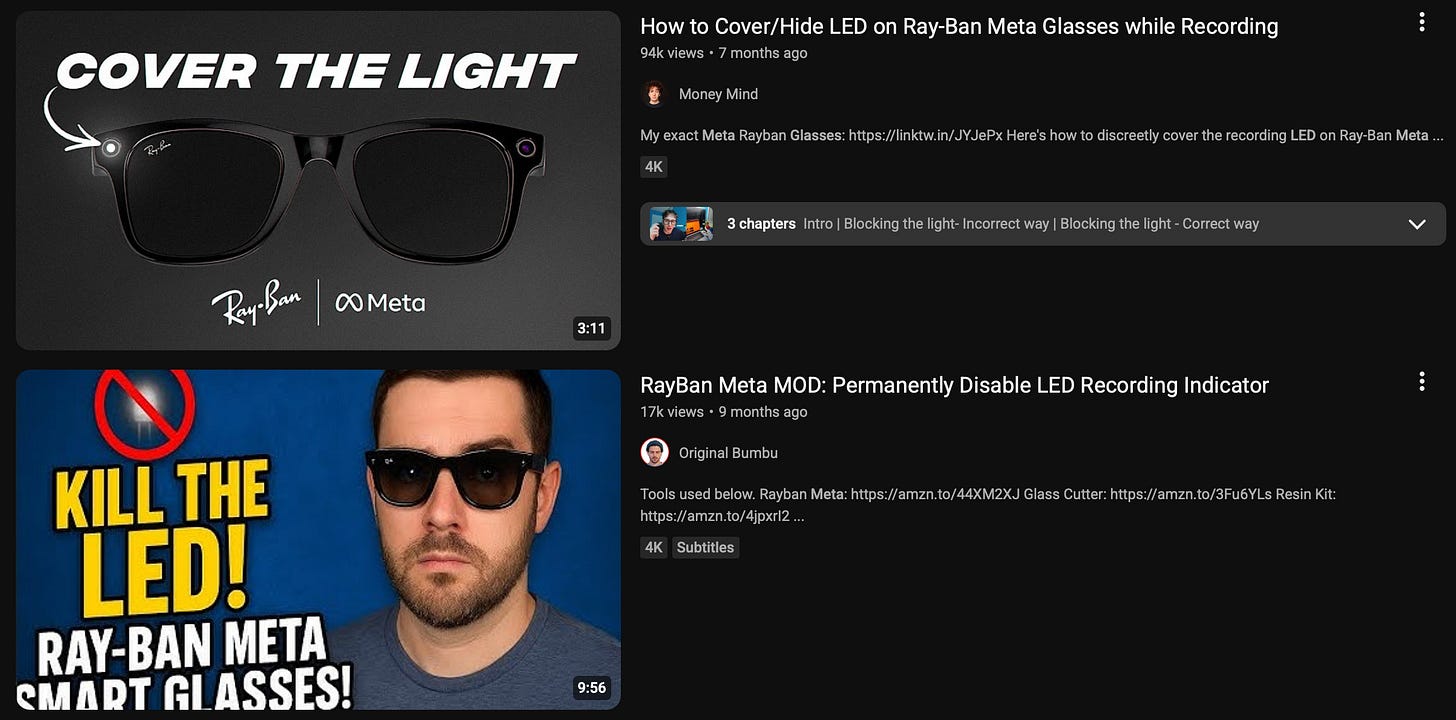

You’ve probably already seen the videos. Women being filmed without their consent by men wearing the glasses. Because the camera is built discreetly into the frame, recording is far less obvious than pulling out a phone. There is meant to be a small LED light that signals when filming is happening, but tutorials online show exactly how to cover or disable it, making it easy to record people without them ever knowing.

So this is not hypothetical.

Journalist Jess Davies, who has been reporting on the impact of tech on women for Glamour, notes that these kinds of videos are on the rise. One woman, Annika, told the magazine she was “being used for content without even knowing the video existed”, adding that the man filming her had deliberately covered the recording light. She described it as part of a wider pattern - the “constant hijacking of tech to harm and humiliate women”.

Meta has said its devices include safeguards such as the LED indicator and tamper detection, and that users are responsible for complying with laws and using the product respectfully. The company acknowledged that a “small number of users” misuse its products, adding that it continues to review ways to improve safety.

However, reporting from the BBC suggests the issue is more widespread. Women have described being unknowingly filmed in public and later finding those interactions uploaded to social media, often attracting large audiences and abusive commentary. One woman said she felt “afraid to go out in public” after discovering a video of her had been posted online without her consent. Another described feeling physically sick after a filmed interaction at the gym was shared and quickly gained thousands of views.

In both cases, the women had no indication they were being recorded at the time. And while the videos themselves were distressing, the aftermath - including sexually explicit and derogatory comments - exacerbated the harm. As one woman put it, the most frightening part was how “out of control” the situation felt.

Advocates say this kind of misuse is not unexpected. Rebecca Hitchen, from the End Violence Against Women Coalition, told the BBC that the use of smart glasses in this way is “sadly, very predictable”, pointing to existing patterns of harassment and abuse directed at women and girls online.

Beyond how the technology is already being used, reports of what could come next are even more worrying. Reporting by The Independent highlights warnings from charities about the potential introduction of facial recognition features in smart glasses - tools that could allow wearers to identify people and access information about them in real time.

Domestic abuse organisations, including Refuge and Women’s Aid, have warned that these features pose a “direct and serious” risk to survivors. Emma Pickering, from Refuge, explains that stalking is already a common tactic used by abusers, and that tech enabling instant identification could make it easier to locate and track victims.

Charities argue that women’s safety should be considered a “foundational principle” in the design of new technologies, rather than something addressed after products are released. Data from Refuge shows a significant increase in cases involving technology-facilitated abuse, confirming a broader trend in how digital tools can be misused.

Taken together, these reports point to a wider issue. While smart glasses and AI-powered devices are often presented as innovative and convenient, their real-world impact depends heavily on how they are used - and misused.

There is, of course, nothing inherently harmful about a device that records video or uses AI to interpret the world. But the experiences being shared suggest that, in practice, these tools are entering an online culture where boundaries around consent and privacy are already blurred.

And that is where the major concern lies.

While companies emphasise safeguards and responsible use, the reality described by women in these reports is one where those safeguards can be bypassed, and where the consequences of misuse fall disproportionately on them.

The technology may be new, but the pattern is not.

My concern is privacy and the fundamental disrespect for it. Meta has repeatedly shown us that it prioritises profit over privacy protection, from Cambridge Analytica to ongoing data breaches. A device that can record you, profile you, and harvest information from social platforms to use against you is a major societal concern. As the article shows, this enables abuse against women, and men as well.

Why does Zuckerberg put a bandage on his laptop webcam?

We need to ask ourselves some questions.

Do we actually want this technology in our daily lives? Why do our regulatory systems allow companies to deploy surveillance tools with minimal safeguards? And what does it say about us that some people are willing to exploit these tools to invade others' lives?

Yes, smart glasses have legitimate uses, but constant surveillance isn't one of them.

Here's the real test: if Zuckerberg believes this technology is safe, why not volunteer to be continuously monitored himself? His refusal would reveal what he already knows: that this level of surveillance is dangerous.

As a society, we can push back.

We must demand real regulations: mandatory consent before recording, criminal penalties for disabling safety features, and accountability for platforms that host non-consensual content. Technology companies cannot be allowed to normalise this level of intrusion into our lives.

I’m so glad people overall, but especially women, are talking about the specific harm to women these glasses are bringing because let’s not kid ourselves and pretend like this won’t harm women disproportionately. It’s creepy, it’s violating, and the men (and women) participating in this kind of circumvention of common courtesy and privacy need to seek therapy to find the root cause of their desire to secretly film women and then mock them online (or whatever else they do with the footage).